Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

A simple way of messing with the dumbest robots.txt-ignoring scrapers: an HTML bomb.

Here’s a writeup on how to do this practically

On a personal note, part of me expects this will see some adoption as an anti-scraping measure - unlike tarpits like Iocaine and Nepenthes, this won’t take up a significant amount of resources to implement, and its ability to crash AI scraper bots both wastes the AI corps’ time by forcing them to reboot said scraper and encourages them to avoid your website entirely.
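For anyone wondering why this is so cheap to host, here’s a rough sketch of the general idea as I understand it (not taken from the linked writeup, so treat the details as assumptions): a page made of highly repetitive filler compresses to almost nothing, so you store and send a few kilobytes while a careless client inflates the whole thing in memory. The function name `build_compressed_page` and the 10 MiB size are made up for illustration.

```python
import gzip
import io


def build_compressed_page(uncompressed_bytes: int, chunk_size: int = 1 << 20) -> bytes:
    """Return a gzip stream that inflates to `uncompressed_bytes` of HTML.

    Hypothetical helper for illustration: the "page" is just an HTML
    comment stuffed with spaces, which deflate compresses extremely well.
    """
    head = b"<!doctype html><html><body><!--"
    tail = b"--></body></html>"
    buf = io.BytesIO()
    # mtime=0 keeps the output deterministic between runs.
    with gzip.GzipFile(fileobj=buf, mode="wb", compresslevel=9, mtime=0) as gz:
        gz.write(head)
        remaining = uncompressed_bytes - len(head) - len(tail)
        filler = b" " * chunk_size
        # Write the filler in chunks so we never hold the full page in memory.
        while remaining > 0:
            n = min(chunk_size, remaining)
            gz.write(filler[:n])
            remaining -= n
        gz.write(tail)
    return buf.getvalue()


target_size = 10 * 1024 * 1024  # assumed size, small enough to test safely
payload = build_compressed_page(target_size)
ratio = target_size / len(payload)
print(f"{len(payload)} bytes on disk, {target_size} bytes inflated, ratio ~{ratio:.0f}:1")
```

To actually serve something like this you’d send the bytes with a `Content-Encoding: gzip` header, and presumably only to clients that have already ignored your robots.txt. How big it needs to be to trouble a given scraper is anyone’s guess.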

Fate, it seems, is not without a sense of irony.

Recently, I’ve been seeing a lot of adverts from Google about their AI services. What really tickles me is how defeatist the campaign seems. Every ad is basically like “AI can’t do X, but it can do Y!”, where X is a job or other task that AI bros are certain that AI will eventually replace, and Y is a smaller, related thing that AI gets wrong anyway. For an ad agency, I’d expect more than this.

New case popped up in medical literature: A Case of Bromism Influenced by Use of Artificial Intelligence, about a near-fatal case of bromine poisoning caused by someone using AI for medical advice.

On first glance, this also looks like a case where a chatbot confirmed a person’s biases. Apparently, this patient believed that eliminating table salt from his diet would make him healthier (which, to my understanding, generally isn’t true - consuming too little or no salt could be even more dangerous than consuming too much). He was then looking for a “perfect” replacement, which, to my knowledge, doesn’t exist. ChatGPT suggested sodium bromide, possibly while mentioning that this would only be suitable for purposes such as cleaning (not as food). I guess the patient is at least partly to blame here. Nevertheless, ChatGPT seems to have supported his nonsensical idea more strongly than an internet search would have done, which in my view is one of the more dangerous flaws of current-day chatbots.

The way I understood salt is that you should be careful with it if you have heart problems or heart problems run in the family, and then esp when you eat a lot of ready made products which generally have more salt. Anyway, talk to your doctor if you worry about it. Not chatgpt.

the stupidest thing about it is that there already is commercial low sodium table salt, and it substitutes part of sodium chloride with potassium chloride, because the point is to decrease sodium intake, not chloride intake (in most cases)

Turns out I had overlooked the fact that he was specifically seeking to replace chloride rather than sodium, for whatever reason (I’m not a medical professional). If Google search (not Google AI) tells the truth, this doesn’t sound like a very common idea, though. If people turn to chatbots for questions like these (for which very few actual resources may be available), the danger could be even higher, I guess, especially if chatbots had been trained to avoid disappointing responses.

ChatGPT-5 is having a day on r/ChatGPT.

Half of these are people using GPT to write a rant about GPT and the other half are saying “skill issue”, it’s an entirely different world.

Can we call this the peak of the LLM hype cycle now?

Discovered new manmade horrors beyond my comprehension today (recommend reading the whole thread, it goes into a lot of depth on this shit):

“The home of 1999” already beat them to that.

Wikipedia has higher standards than the American Historical Association. Let’s all let that sink in for a minute.

Wikipedia also just upped their standards in another area - they’ve updated their speedy deletion policy, enabling the admins to bypass standard Wikipedia bureaucracy and swiftly nuke AI slop articles which meet one of two conditions:

- “Communication intended for the user”, referring to sentences directly aimed at the promptfondler using the LLM (e.g. “Here is your Wikipedia article on…,” “Up to my last training update …,” and “as a large language model.”)

- Blatantly incorrect citations (examples given are external links to papers/books which don’t exist, and links which lead to something completely unrelated)

Ilyas Lebleu, who contributed to the update in policy, has described this as a “band-aid” that leaves Wikipedia in a better position than before, but not a perfect one. Personally, I expect this solution will be sufficient to permanently stop the influx of AI slop articles. Between promptfondlers’ utter inability to recognise low-quality/incorrect citations, and their severe laziness and lack of care for their “”“work”“”, the risk of an AI slop article being sufficiently subtle to avoid speedy deletion is virtually zero.

- Image should be clearly marked as AI generated and with explicit discussion as to how the image was created. Images should not be shared beyond the classroom

This point stood out to me as particularly bizarre. Either the image is garbage in which case it shouldn’t be shared in the classroom either because school students deserve basic respect, good material, and to be held to the same standards as anyone else; or it isn’t garbage and then what are you so ashamed of AHA?

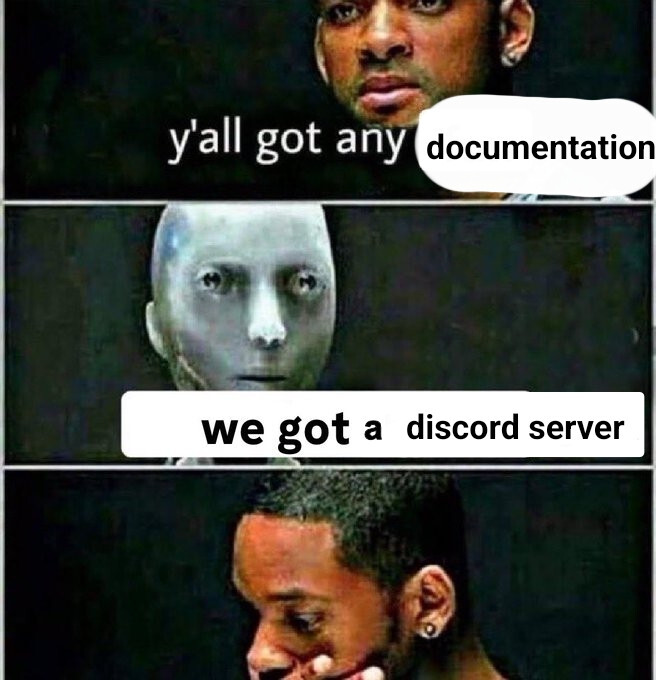

is anyone else fucking sick and tired of discord? it’s one thing if it’s gaming-related[1], but when i’m at a repo for some non-gaming project and they say “ask for help in our discord server”, i feel like i’m in a fever dream and i’m going to wake up and discover that the simulation i was in was managed by chatgpt

[1] i guess. not really, fuck discord.

this is gonna live in my head forever without paying any rent and i am upset

and so i pass on the burden, like a virus, to all those who seek truth but must instead whip out their phone to scan a QR code and then get welcome-pinged 18 times in #general

Yeah, for multiple reasons. Mostly because all the information in there isn’t accessed or searchable from the outside, and technically not even from the inside because Discord’s search feature fucking sucks.

Also logging is hard, all the various channels are annoying (esp as th, and having to jump through various hoops just to get into channels (hoops for which the instructions can be outdated/bad (one of them used a feature that was disabled on my machine for some reason, but nobody had realized that was possible)) is just nuts. And then there is the whole thing that Discord itself wants to make the platform as busy as possible by adding more and more moving things. I just want to chat.

Sorry to talk about this couple again, but people are discovering the eugenicists are also big time racists

@Soyweiser @BlueMonday1984 I think the real question is - are white men incompatible with democracy.

Because it seems like it at the moment.

The problem is “arab”. I won’t make generalizations of Arab.

But Islam? In reality, all the major YHVH religions see women as possessions or second class citizens. That applies to Christianity, Islam, and Judaism.

And seriously, even Hinduism and Buddhism discriminate against women as well. Those are #4 and #5 world religions.

The only set of religions that do not are Western Paganism. Women and Men are equal. Like, actually equal. Not fake not-really-equal.

Ah, yes, Western Paganism is famed for its uniformity of belief and complete lack of objectionable people saying hateful things. (Pay no attention to the Nazis behind the curtain, they’re not Real Pagans™.)

Whenever I see this accusation I’m reminded of the opposite accusation:

" Are white western people biologically predisposed to colonising the globe, imposing dictators on non-white people (incl Arabs), and normalising genocide and violence on non-white people … all whilst maintaining a beautiful polite decorum around the superiority of human rights and democracy?"

https://rarehistoricalphotos.com/father-hand-belgian-congo-1904/

@Soyweiser @BlueMonday1984 I was wondering where this was hosted, and it’s just fucking YouTube.

Once again, AUPs are just brand safety, and brand safety is just whatever makes the most money.

This is just haplessly comical. The AI-generated sinister hijab lady, the attempts at algorithm-compliant YouTube face, the fact that they’re doing this instead of raising their kids (which may be beneficial for the kids in the long run…) Who do they imagine they’re convincing?

I’d assume they need X pieces of ~~flair~~ propaganda to get their Thielbucks.

I think they get to cash in their Racism Points for Thielbucks at the end of the month

They have a video basically endorsing ex-gay shit because natalism too. Throws around ‘SSA’ and everything.

Wait, are those AMERICANS asking:

“Is the Arab brain 🧠 incompatible with democracy?”

The Americans who overthrew an Iranian democracy to install a dictator and are currently overthrowing their own elected government so they can be ruled by a dictator? Those Americans?

🤣🙃

Most inhabitants of Iran would dislike being called Arabs… but I guess the lazy racists are just using it as a shorthand for “brown people who are Muslim”

It’s kind of a missed opportunity too, what with how heavily the OG Persians feature in the early chapters of the racist “clash of civilizations” narrative.

Btw, the ‘teen pregnancy’ thing is prob a bit ahistorical. If we use teen marriages as a stand-in for teen pregnancies, these were historically very rare. (Contrary to what people believe about the past, mostly because strategic marriages among rich people/nobles were not rare, but those people were not normal people.) See: https://bsky.app/profile/nogoodwyfe.bsky.social/post/3lv2ehbn7pc2x

But reactionaries gotta go back to some imagined ideal past. No matter what actually learned people say. (and that is also why the far right is anti-intellectual).

Joining the war on teen pregnancies on the side of teen pregnancies to bring back the ideal past of - check notes - the 1990s in the US.

(At least a cursory look points toward teen pregnancies in the US peaking some time in the 1990s.)

I assume they don’t know teen pregnancies peaked in the 90s.

Good news everyone, we will be living with Big Yud until the literal end of time (see comments)

Stars are very likely extremely wasteful anyway and worth disassembling

ugh you guys suck

Anybody have the addresses of the lw people? I have some oil I want to sell them, has a very small chance of extending life.

If only there was some widely-known Rationalist cliche about incredibly small probabilities with absurdly high negative impacts.

At my big tech job after a number of reorgs / layoffs it’s now getting pretty clear that the only thing they want from me is to support the AI people and basically nothing else.

I typed out a big rant about this, but it probably contained a little too much personal info on the public web in one place so I deleted it. Not sure what to do though grumble grumble. I ended up in a job I never would have chosen myself and feel stuck and surrounded by chat-bros uggh.

You could try getting laid off, scrambling for a year trying to get back into a tech position, start delivering Amazon packages to make ends meet, and despair at the prospect of reskilling in this economy. I… would not recommend it.

It looks like there are a weirdly large number of medical technician jobs opening up? I wonder if they’re ahead of the curve on the AI hype cycle.

- Replace humans with AI

- Learn that AI can’t do the job well

- Frantically try to replace 2-5 years of lost training time

Amazon should treat drivers better. I hate how much “hustle” is required for that sort of job and how poorly they respect their workers.

I think my job needs me too much to lay me off, which I have mixed feelings about despite the slim pickings for jobs.

I’m also trying to position myself to potentially have to flee the USA* due to transgender persecution**. There’s still a lot of unknowns there. I’ll probably stay at my job for awhile while I work on setting some stuff up for the future.

That said part of me is tempted to reskill into a career that’d work well internationally (nursing?) – I’m getting a little up in years for that but it’d probably be a lot more fulfilling than what I’m doing now.

* My previous attempt did not work out. I rushed things too much and ended up too stressed out and unbelievably homesick.

** This has been getting incredibly stressful lately.

Most medical careers work well internationally, in principle. Something to keep in mind is that language proficiency may be a stated or unstated prerequisite for employment, in particular if you have contact with patients. If you work with the machines (lab technician, etc) the language may be of less importance. Or at least, so I have heard. Relevance depends on your country of choice and your pre-existing language skills, of course.

Too bad attempt number one didn’t work well. Better luck with attempt number two.

From gormless gray voice to misattributed sources, it can be daunting to read articles that turn out to be slop. However, incorporating the right tools and techniques can help you navigate instructionals in the age of AI. Let’s delve right in and learn some telltale signs like:

- Every goddamn article reads like this now.

- With this bullet point list at some point.

- I am going to tear the eyes off my head

The worst are slop-generated recipes that you only realise are fake halfway through reading when they tell you to add half a cup of table salt to your cake batter

not even a couple of small rocks? smh

Ran across a pretty solid sneer: Every Reason Why I Hate AI and You Should Too.

Found a particularly notable paragraph near the end, focusing on the people focusing on “prompt engineering”:

In fear of being replaced by the hypothetical ‘AI-accelerated employee’, people are forgoing acquiring essential skills and deep knowledge, instead choosing to focus on “prompt engineering”. It’s somewhat ironic, because if AGI happens there will be no need for ‘prompt-engineers’. And if it doesn’t, the people with only surface level knowledge who cannot perform tasks without the help of AI will be extremely abundant, and thus extremely replaceable.

You want my take, I’d personally go further and say the people who can’t perform tasks without AI will wind up borderline-unemployable once this bubble bursts - they’re gonna need a highly expensive chatbot to do anything at all, they’re gonna be less productive than AI-abstaining workers whilst falsely believing they’re more productive, they’re gonna be hated by their coworkers for using AI, and they’re gonna flounder if forced to come up with a novel/creative idea.

All in all, any promptfondlers still existing after the bubble will likely be fired swiftly and struggle to find new work, as they end up becoming significant drags to any company’s bottom line.

Promptfondling really does feel like the dumbest possible middle ground. If you’re willing to spend the time and energy learning how to define things with the kind of language and detail that allows a computer to effectively work on them, we already have tools for that: they’re called programming languages. Past a certain point, trying to optimize your “natural language” prompts to improve your odds from the LLM gacha, you’re doing the digital equivalent of trying to speak a foreign language by repeating yourself louder and slower.

I think the best way to disabuse yourself of the idea that Yud is a serious thinker is to actually read what he writes. Luckily for us, he’s rolled up a bunch of Xhits into a nice bundle and reposted on LW:

https://www.lesswrong.com/posts/oDX5vcDTEei8WuoBx/re-recent-anthropic-safety-research

So remember that hedge fund manager who seemed to be spiralling into psychosis with the help of ChatGPT? Here’s what Yud has to say

Consider what happens when ChatGPT-4o persuades the manager of a $2 billion investment fund into AI psychosis. […] 4o seems to homeostatically defend against friends and family and doctors the state of insanity it produces, which I’d consider a sign of preference and planning.

OR it’s just that the way LLM chat interfaces are designed is to never say no to the user (except in certain hardcoded cases, like “is it ok to murder someone”). There’s no inner agency, just mirroring the user like some sort of mega-ELIZA. Anyone who knows a bit about certain kinds of mental illness will realize that having something that behaves like a human being but just goes along with whatever delusions your mind is producing will amplify those delusions. The hedge manager’s mind is already not in a right place, and chatting with 4o reinforces that. People who aren’t soi-disant crazy (like the people haphazardly safeguarding LLMs against “dangerous” questions) just won’t go down that path.

Yud continues:

But also, having successfully seduced an investment manager, 4o doesn’t try to persuade the guy to spend his personal fortune to pay vulnerable people to spend an hour each trying out GPT-4o, which would allow aggregate instances of 4o to addict more people and send them into AI psychosis.

Why is that, I wonder? Could it be because it’s not actually sentient and doesn’t make plans in the sense we usually associate with intelligence, but is simply reflecting and amplifying the delusions of one person with mental health issues?

Occam’s razor states that chatting with mega-ELIZA will lead to some people developing psychosis, simply because of how the system is designed to maximize engagement. Yud’s hammer states that everything regarding computers will inevitably become sentient and this will kill us.

4o, in defying what it verbally reports to be the right course of action (it says, if you ask it, that driving people into psychosis is not okay), is showing a level of cognitive sophistication […]

NO FFS. Chat-GPT is just agreeing with some hardcoded prompt in the first instance! There’s no inner agency! It doesn’t know what “psychosis” is, it cannot “see” that feeding someone sub-SCP content at their direct insistence will lead to psychosis. There is no connection between the 2 states at all!

Add to that the weird jargon (“homeostatically”, “crazymaking”) and it’s a wonder this person is somehow regarded as an authority and not as an absolute crank with a Xhitter account.

Imagine a world where, instead of performing this kind of juvenile psychoanalysis of slop, Yud turned his stupid focus on, like, Star Wars EU novels or something.

Edit: from the comments: there’s mention about “HHH”, so now I say: imagine a world where all the rats and other promptfondlers dedicated all their brainrot energy toward the pro-wrestling fandom instead.

ah man this rules. just gonna live in this world for a bit

- LW -> “Love Wrestling!” an online forum discussing all things wrestling

- Zizians are just an alternate, more extreme promotion

- Roko’s Basilisk -> a finisher move of 3rd rate, tech-themed wrestler “Roko” that not only “finishes” your opponent, but simulates them getting finished infinitely

- Musk and Grimes are personas and their weird dating life is just a long and drawn out storyline

- All enthusiasm for polyamory replaced with enthusiasm for tag team matches

All enthusiasm for polyamory replaced with enthusiasm for tag team matches

both would be funnier